EI论文代写代发范文:Improvements to PI and EI Under Gaussian Noise Premise at Current Optimum

Improvements to PI and EI Under Gaussian Noise Premise at Current Optimum

这是一篇EI国际会议论文代写代发范文,This paper discusses the acquisition function in Bayesian Optimization in machine learningapplications. Based on the traditional acquisition function, the systematic noise between the observationdata and the ground truth is not fully considered. When the noise satisfies the Gaussian distributionassumption, we propose modified acquisition functions for EI and PI respectively. In addition, webelieve that the following perspectives can be used as future work:• When the number of iterations increases beyond a threshold, we should consider using a morecomplex hypothesis space to construct the prediction of unknown points, such as Gaussian mixturedistribution or depth neural network with complex structure.• When calculating the collection function of a point, the information of nearby points shouldbe weighed at the same time, which can be realized by an algorithm similar to random forest, inwhich the nearby points will be assigned to a leaf node.• When the data contains non Gaussian noise, acquisition functions should be constructedcorrespondingly to achieve better balancing the exploration and exploitation, so as to improve theoptimization efficiency.

1. IntroductionMachine learning has achieved phased success. However, almost all machine learning models needto optimize hyper-parameters, such as Neural Networks, Topic Model and Random Forest. In practice,tuning the hyper-parameters includes methods such as Grid Search, Random Search [1], and Gradient-based Optimizations [2]. These mehthods are then designed in order to minimize empirical risk withthe desired efficiency or convergence speed. Bayesian Optimization [3] is acted as a probabilisticapproach that majorly implements Gaussian Process (GP) and utilizes its property of both predictionand uncertainty measure to achieve derivative-free optimization. It can be used when the gradient offunction for optimizing is not accessible.For Bayesian optimization, J Snoek et al. summarizes the applications in the field of machinelearning, and numerical simulation shows that Bayesian optimization has the characteristics of highefficiency and strong convergence [4]. Martin Pelikan further find that hierarchy can be used to reduceproblem complexity in black box optimization [5]. K Swersky et al. extends multi-task Gaussianprocesses to the framework of Bayesian optimization, and aims to transfer the knowledge gained fromprevious optimizations to new tasks in order to find optimal hyperparameter settings more efficiently[6]. J Snoek et al. further explores the use of neural networks as an alternative to GPs to modeldistributions over functions [7].

2. Algorithms

2.1. Gaussian ProcessGaussian process [8] can be considered as a proxy for a black-box function which enablesuncertainty quantification. Gaussian process (GP) is infinite-dimensional multivariate Gaussiandistribution. Covariance matrix of this distribution is defined by kernel functions k(⋅,⋅). Imagining 𝐱forms a finite discretization of input space. Assuming the distribution has zero mean, prior draws 𝐟 canbe simulated:f|𝐱 ∼ 𝒩(0, 𝐊) Statistical assumptions about the GP prior are represented in kernel functions. A commonly adoptedkernel function is Matern kernel [8], where ν controls the smoothness of gaussian process. Let r = 𝐱i −𝐱j, Matern class of covariance function has the following definition:k(r) = 21−νΓ(ν) (√2νrℓ )νKν (√2νrℓ ) with positive parameters ν and length-scale ℓ, Gamma function Γ and modified Bessel function Kν.Smooth GP kernels assumes that if x and x′ are close by, then f(x) and f(x′) have similar values.Given noise observations 𝐲1:n at 𝐱1:n where yi ∼ 𝒩(fi, σy2) . For a new point 𝐱n+1 , the jointprobability distribution is given by(𝐲1:nfn+1) ∼ 𝒩 (𝟎, [𝐊 + σy2𝕀 𝐤(𝐱1:n, 𝐱n+1)𝐤(𝐱n+1, 𝐱1:n) k(𝐱n+1, 𝐱n+1)]) where 𝐊 = 𝐊(𝐱1:n, 𝐱1:n). After applying the rule for conditional gaussians, we can gather the posteriorover function values fn+1|𝐲1:n , which follows a univariate Gaussian distribution𝒩(μ(𝐱n+1), σ2(𝐱n+1)) withμ(𝐱n+1) = 𝐤(𝐱1:n, 𝐱n+1)(𝐊 + σy2𝕀)−1𝐲1:nσ2(𝐱n + 1) = k(𝐱n+1, 𝐱n+1) − 𝐤(𝐱1:n, 𝐱n+1)(𝐊 + σy2𝕀)−1𝐤(𝐱n+1, 𝐱1:n)GP regression estimates the probability distribution of function values on unevaluated points. Foreach prediction location 𝐱∗, mean μ(𝐱∗) gives the best estimate of the function value, and varianceσ2(𝐱∗) models the uncertainty at the point. Acquisition functions utilizes the computed distribution toguide the search for the optimal function value.

2.2. Acquisition FunctionsAcquisition functions take the mean and variance at each unevaluated point as input and compute avalue indicating how favorable it is to sample at this point. It trades off between exploitation andexploration:• Exploitation: looking for locations that minimize the posterior mean μ(𝐱).• Exploration: looking for locations that maximize posterior variance σ2(𝐱)Given the data C = [(𝐱1, y1), ⋯ , (𝐱t, yt)] observed, the next point 𝐱t+1 is chosen by the ranking thevalue returned by acquisition function at candidate points.𝐱t+1 = arg min𝐱a(𝐱|C) ()Acquisition functions is defined as the expected utility u at the unevaluated location 𝐱:a(𝐱|C) = 𝔼(u(𝐱, y)|𝐱, C)= ∫ u(𝐱, y) p(y|𝐱, C) dy ()The probability p(y|𝐱, C) here is gathered from posterior distribution 𝒩(μ(𝐱), σ2(𝐱)) calculated byGP regression.

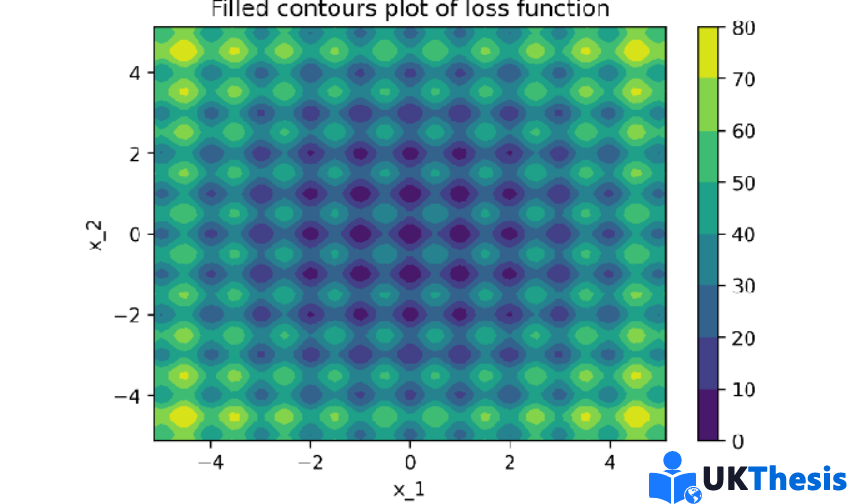

3. ExperimentsPerformance of modified versions of PI and EI are compared with the traditional PI and EI on 3selected 2D benchmark functions. Variables including kernel functions, kernel parameters and positionof pre-samplings are controlled to be the same for each set of experiment. We will visualise samplingposition and global optima in search space, and current minimal loss at each iteration. Performance of4 acquisition functions on each benchmark function will be discussed by sections.

Figure 1 shows the contour of this function. Sampling locations and loss in 45 iterations for 4acuqisation functions are in table 1, where star points represents the global optima and blue points arethe sampling locations. We will compare acquisition functions in pair. PI performed competitively inthe given environment, its sampling trajectory to the minima almost follows the gradient direction. Afterit reaches the global minima, it only sample the locations close to it. MPI shows similar performance,the difference is that it takes longer time (more iterations) to reach optima, and it will occationally jumpout and sample locations far from current minima. EI and MEI puts more leverage at exploration side.Both of them will search globally before start to exploit near to loss optimum. Unlike EI, MEI convergesfaster after it had sampled locations close to global minima, it does not frequently jump out andsearching locations far from current optima.

4. ConclusionsThis paper discusses the acquisition function in Bayesian Optimization in machine learningapplications. Based on the traditional acquisition function, the systematic noise between the observationdata and the ground truth is not fully considered. When the noise satisfies the Gaussian distributionassumption, we propose modified acquisition functions for EI and PI respectively. In addition, webelieve that the following perspectives can be used as future work:• When the number of iterations increases beyond a threshold, we should consider using a morecomplex hypothesis space to construct the prediction of unknown points, such as Gaussian mixturedistribution or depth neural network with complex structure.• When calculating the collection function of a point, the information of nearby points shouldbe weighed at the same time, which can be realized by an algorithm similar to random forest, inwhich the nearby points will be assigned to a leaf node.• When the data contains non Gaussian noise, acquisition functions should be constructedcorrespondingly to achieve better balancing the exploration and exploitation, so as to improve theoptimization efficiency.